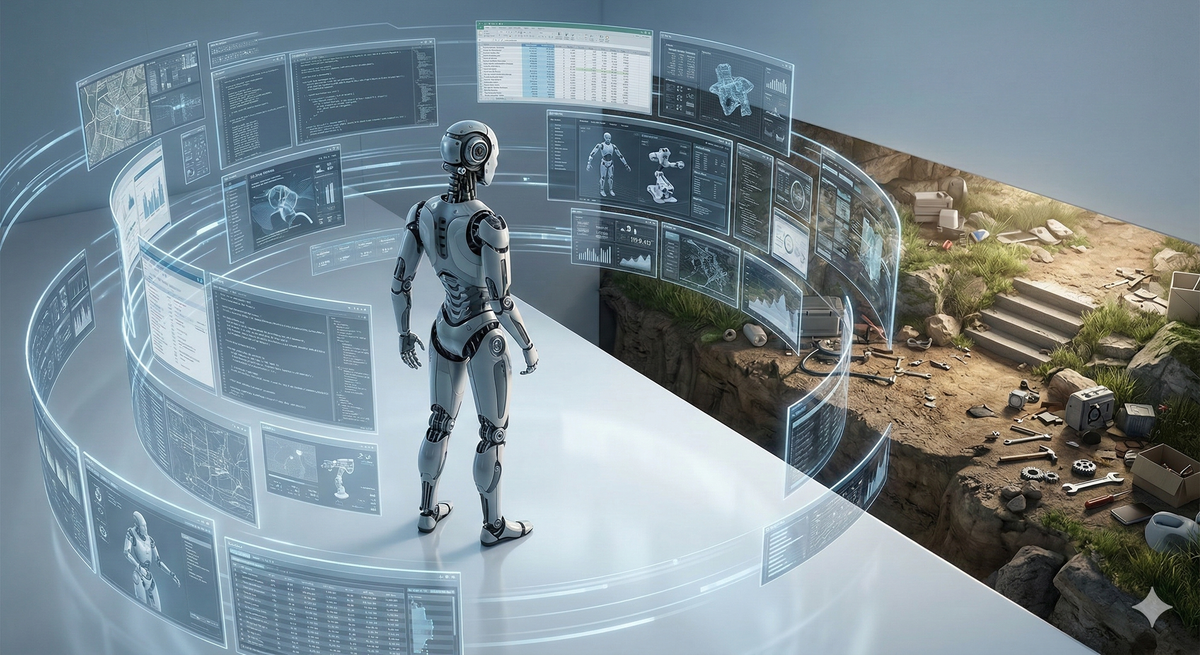

AI not embodied is forever hollow and never general

If AGI is tested only on digital work, it will pass while remaining hollow. Intelligence without embodied experience is too narrow to be truly general. A better test is whether an AI agent can teleoperate robots across the physical world as well as a human.

My boyhood dreams and fantasies were always physical: space travel, Formula One victory, breathtaking goals or Amazonian exploration. Undoubtedly I drew hugely on what I read as well as the games we played, but there’s a reason we speak admiringly of works and performances that ‘bring the story to life’. When a story is believable it becomes more powerful and vivid in our imaginations. We can assimilate it into our world view and creatively build upon it. My intelligence is born largely out of my experience, and the most powerful experiences, even those I read or heard, exist in my memory with shape, texture, smell and vibrancy. Although I am completely blind, my internal world is not flat or monochrome. On the one occasion I dreamt of proving a hitherto unproven mathematical theorem, I wrestled that beast to the ground in a Middle Earth labyrinth, then emerged triumphant into sunlight, birdsong and springtime fragrance.

Back to reality, AI progress over the last 12 months raises many questions about the nature of intelligence and how closely Artificial General Intelligence can and should mirror human intelligence.

Most current definitions of AGI implicitly make embodiment optional by focusing on a broad range of cognitive tasks which can be performed and tested by interaction with digital content and digital tools through a computer user interface (e.g. a keyboard and mouse).

The restriction to digital work is historically understandable. Robot sensors, manipulators and mobility in real-world terrain were until recently insufficient for a broad set of human tasks.

So the definition of AGI sidestepped this limitation by focusing on digital tasks.

That’s all fine, but no amount of spreadsheet wizardry, software engineering or legal analysis maketh the human. Just as an example, the absence of parenthood, let alone childbirth, in my own lived experience leaves a big hole in my intelligence, particularly on the empathy front (cue vigorous nodding and wry smiles – you know who you are).

If we do not somehow test embodied work, AI will pass the AGI test without any capacity to understand, experience or navigate the physical world. And without that grounding, AGI will be hollow and too narrow to be intelligent in a human sort of way.

However, with a modest extension to the definition of AGI, it becomes clear that true AGI disrupts much, much more than white-collar jobs.

As background to my proposed addition: over the next 5 years, robots will be deployed at massive scale across many sectors, and initially they will be teleoperated by humans. Teleoperation is partly for safety reasons but partly to help the robots learn how humans themselves navigate and manipulate the real world. This teleoperation happens through a remote computer screen, keyboard, mouse and joystick. The latest Computer Use Agents can drive that same interface by simulating human mouse clicks, keystrokes or joystick movement. So, rather than either brushing embodiment under the carpet, or endlessly debating what constitutes an acceptable set of sensors and actuators for an embodiment test, we should simply extend the definition of AGI to say, in essence, that AGI (acting through a Computer Use Agent) must be able to teleoperate any robot as successfully as a human can teleoperate it.

This short document contextualises and formalises the above addition to the test for AGI. Feedback is very welcome and do feel free to share or adopt this formulation when discussing AGI.