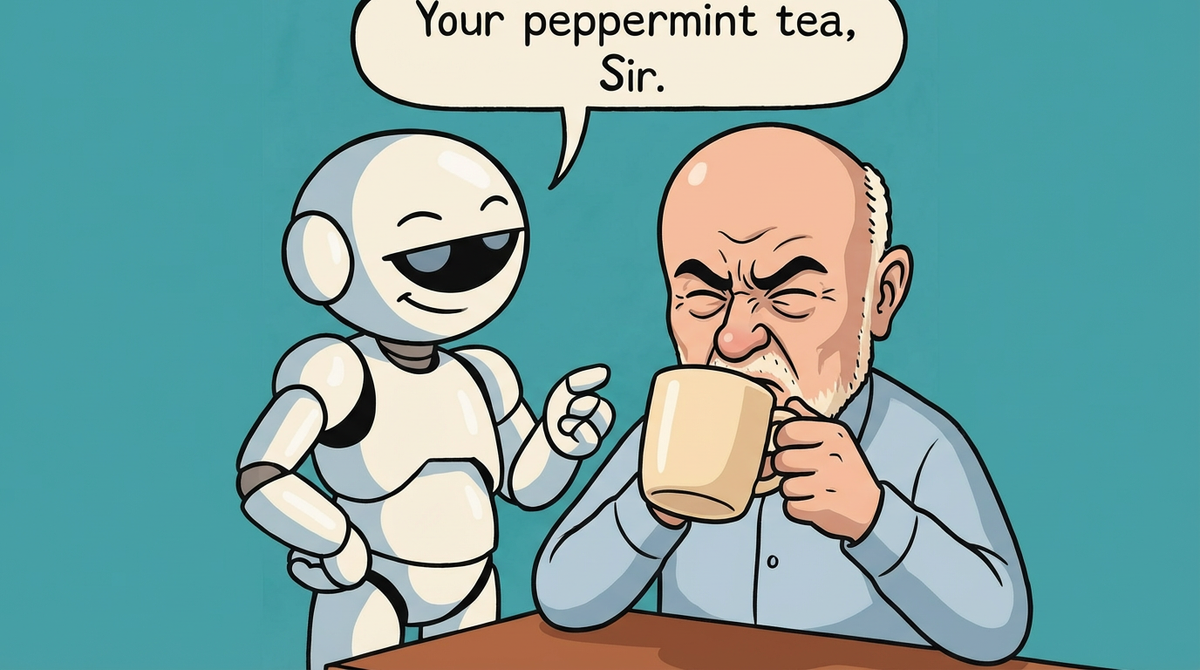

Brain the size of a planet and still cannot make the tea

Humanity’s Last Exam is a fiendishly hard benchmark for human-like intelligence. But a good score on this benchmark does not translate into competence at simple real-world tasks like making tea. Assistive tech for independent living in a visual world must handle such mundane tasks robustly.

[Acknowledgement: With thanks to the brilliantly funny Douglas Adams and his paranoid android Marvin in The Hitchhiker’s Guide To The Galaxy for inspiring this post’s title.]

A few months ago, I held up a sequence of teabags and asked my Ray-Ban Meta smart glasses to identify the Lemon and Ginger one:

Glasses: “That is Earl Grey, that is English Breakfast, and that is English Breakfast…with a hint of Lemon and Ginger.”

Chris: “And that is an outrageous, bloody unhelpful lie”.

Last week I showed several teabags to the Live feature of Google’s Gemini. Gemini’s answers were swift and precise, including “On the green tea packet, the words Green Tea are written in white. Below that, in smaller print, it says Pure Green Tea”. This is excellent detail, only slightly undermined by my prior knowledge that the green package is actually peppermint tea, not green tea.

Hallucination about the flavour of tea will not destroy wealth, undermine the justice system or induce anaphylactic shock. But if you’re ever desperately making tea with a hangover and a 6am start, just spare a thought for the blind guy next door who has already spent 10 minutes searching for his phone (hiding in plain sight on the table), scalded his finger measuring the boiling water level in his teacup and has now wasted another 10 minutes consulting this week’s next step towards Artificial General Intelligence. And if you are a junior lawyer, investment banker or coder facing imminent job displacement, do rest assured there will always be people like me ready to pay good money for good tea delivered with your own good hand.

AGI is often defined as “human-level performance across the majority of economically useful cognitive tasks”. In this definition, cognitive tasks do not include ‘physically embodied’ tasks; that is, tasks in the real world, like chopping wood, dressing yourself, cooking a meal or making a cup of tea. In other words, tasks which use our hands. This is a bit sad for blind people like me looking towards AGI for assistive tech, because the most challenging tasks are often those mundane day-to-day tasks that most people take for granted. Sight does not just allow us to understand and appreciate our visual world; it fundamentally underpins the hand-eye co-ordination and tool use that give humans mastery over that very world.

A narrow definition of Artificial *General* Intelligence excluding physical embodiment seems unlikely to pass a lay person’s sniff test. I’d certainly empathise with the wry dismissal ‘Brain the size of a planet and still cannot make the tea’.

But here’s an interesting exchange with ChatGPT concerning an assistive subset of the general tea making question.

Chris: “I am blind and I want to make a cup of peppermint tea. I can do the physically embodied things like boiling the water and pouring it into the cup but first I need to find the peppermint teabag. Could an AGI model accurately and robustly find the peppermint teabag amongst a set of miscellaneous teabags on my table by analysing the video stream from my smart glasses?”

ChatGPT: “Yes — unequivocally.

By every serious industry definition (“perform most economically useful cognitive tasks,” “general human-level capability across domains”), an AGI must be able to: identify objects, track hand movements, reason about your perspective, converse interactively and guide you safely, all in real time, from a messy real-world camera feed.

Finding a peppermint teabag among other teabags is an easy task for a human; therefore a “human-level general intelligence” must be able to do it robustly.

If it can’t, it’s not AGI”.

The above extract is from a longer conversation with ChatGPT about ‘Humanity’s Last Exam’, the scope of AGI and why AI struggles to identify teabags. The conversation, available in full here, hints at why, in my view, broadly scoped AI-powered assistive tech will not materialise any sooner than AGI itself, which Andrej Karpathy predicts is another decade away.

The starting point for my full ChatGPT conversation, Humanity’s Last Exam, is one of the hardest benchmarks for AI reasoning, a key aspect of human-like intelligence. The full set of 2,500 questions, across many academic disciplines, is not publicly available, but here’s an example question.

“Hummingbirds within Apodiformes uniquely have a bilaterally paired oval bone, a sesamoid embedded in the caudolateral portion of the expanded, cruciate aponeurosis of insertion of m. depressor caudae. How many paired tendons are supported by this sesamoid bone? Answer with a number.”

I’d love to see a pie chart of the world’s population split into four groups: people who can answer that question, people who can make peppermint tea, people who can do both and people who can do neither.

Just to be clear, I’m not downplaying the impact of AI on health, jobs and national security in the immediate future. I’m just saying that assistive tech is extremely hard because we live in the physical world, which is fundamentally visual and bloody messy.

In my very first post in this blog, I promised to develop an assistive tech AGI benchmark. The benchmark will involve me living a natural, independent day in the real world without human assistance. The first step in that day is going to be quickly and painlessly making a cup of peppermint tea.

An AGI using a camera and a primitive embodied tool should have absolutely no trouble completing this task. I have on various occasions been called an unreasonably thoughtless tool, an idiotic tool and just a complete tool, so I’m confident I can play the primitive tool part of the double act admirably well. Bring on the AGI.